...

GoDaddy OPA Lambda Extension Plugin

Go

OPA is written in Go and can be embedded in Go applications as a library (for example via its `rego` package).

...

`opa bench --data rbactest.rego 'data.rbactest.allow'`

| Metric | Value |
|---|---|
| samples | 22605 |
| ns/op | 47760 |
| B/op | 6269 |
| allocs/op | 112 |
| histogram_timer_rego_external_resolve_ns_75% | 400 |
| histogram_timer_rego_external_resolve_ns_90% | 500 |
| histogram_timer_rego_external_resolve_ns_95% | 500 |
| histogram_timer_rego_external_resolve_ns_99% | 871 |
| histogram_timer_rego_external_resolve_ns_99.9% | 29394 |
| histogram_timer_rego_external_resolve_ns_99.99% | 29800 |
| histogram_timer_rego_external_resolve_ns_count | 22605 |
| histogram_timer_rego_external_resolve_ns_max | 29800 |
| histogram_timer_rego_external_resolve_ns_mean | 434 |
| histogram_timer_rego_external_resolve_ns_median | 400 |
| histogram_timer_rego_external_resolve_ns_min | 200 |
| histogram_timer_rego_external_resolve_ns_stddev | 1045 |
| histogram_timer_rego_query_eval_ns_75% | 31100 |
| histogram_timer_rego_query_eval_ns_90% | 37210 |
| histogram_timer_rego_query_eval_ns_95% | 47160 |
| histogram_timer_rego_query_eval_ns_99% | 91606 |
| histogram_timer_rego_query_eval_ns_99.9% | 630561 |
| histogram_timer_rego_query_eval_ns_99.99% | 631300 |
| histogram_timer_rego_query_eval_ns_count | 22605 |
| histogram_timer_rego_query_eval_ns_max | 631300 |
| histogram_timer_rego_query_eval_ns_mean | 29182 |
| histogram_timer_rego_query_eval_ns_median | 25300 |
| histogram_timer_rego_query_eval_ns_min | 15200 |
| histogram_timer_rego_query_eval_ns_stddev | 32411 |

OPA & MinIO

OPA & MinIO's Access Management Plugin

OPA Sidecar injection

First, create a namespace for your apps and enable Istio and OPA sidecar injection on it.

...

Kafka SSL: Setup with a self-signed certificate

Kafka & Istio

Integration of Istio with Kafka is limited.

...

Service Mesh and Proxies: Examples for Kafka

Setting up Strimzi bridge with Istio Authorization and ACLs

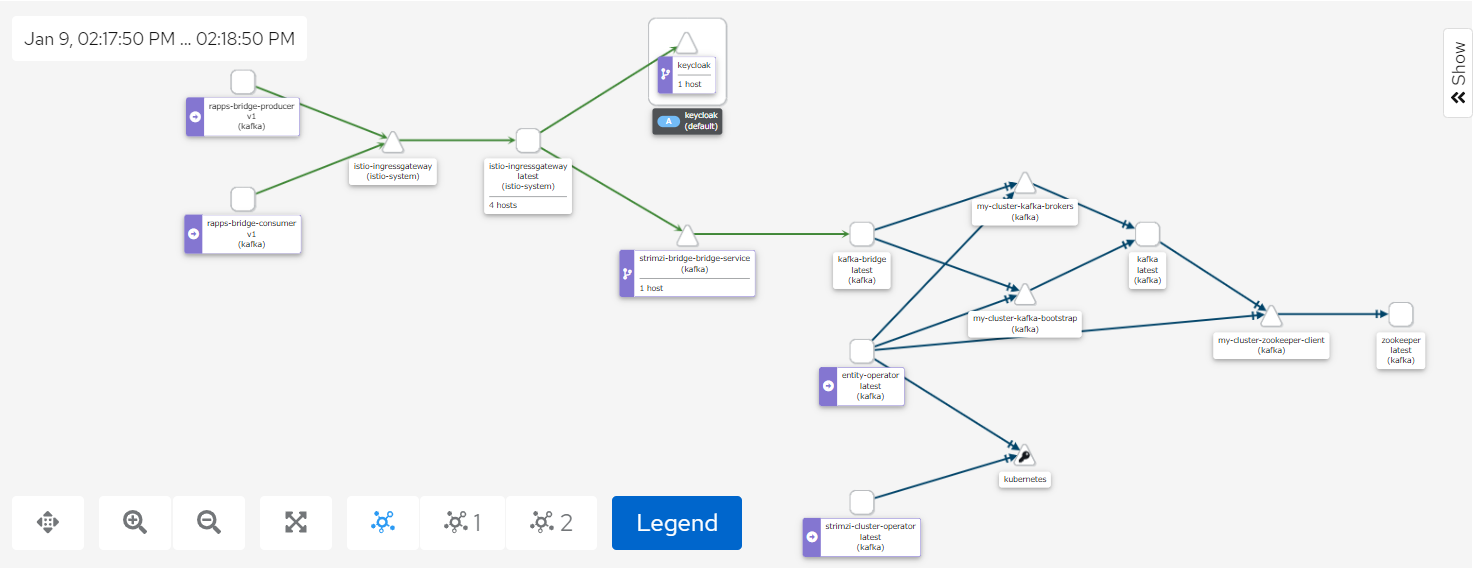

The Kiali screenshot above shows a producer and a consumer connecting to Kafka through the Strimzi bridge.

Access to the bridge is controlled with JWTs. An Istio AuthorizationPolicy checks each token's principal (issuer/subject) and its `clientRole` claim:

```yaml
apiVersion: "security.istio.io/v1beta1"
kind: "AuthorizationPolicy"
metadata:
  name: "kafka-bridge-policy"
  namespace: kafka
spec:
  selector:
    matchLabels:
      app.kubernetes.io/name: kafka-bridge
  action: ALLOW
  rules:
  # from, to and when are kept in ONE rule so that all of them must match;
  # separate list items under "rules" would be ORed together.
  - from:
    - source:
        # Request principals have the form <issuer>/<subject>; the trailing
        # /* matches any subject issued by the realm.
        requestPrincipals: ["http://keycloak.default:8080/auth/realms/opa/*", "http://istio-ingressgateway.istio-system:80/auth/realms/opa/*"]
    to:
    - operation:
        methods: ["POST", "GET"]
        paths: ["/topics", "/topics/*", "/consumers/*"]
    when:
    - key: request.auth.claims[clientRole]
      values: ["opa-client-role"]
```
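The Python sketch below is a simplified model of how the ALLOW rule decides on a request. It is not Istio's implementation. It assumes that the `from`, `to` and `when` conditions are meant to form a single rule, and that each principal ends in a `/*` wildcard so that any subject from the realm matches. All conditions must hold. Paths and principals use Istio-style exact, prefix (`abc*`), suffix (`*abc`) or presence (`*`) matching.

```python
from typing import Iterable, List, Union

# Assumed intent of the policy: one rule combining from/to/when, with a
# trailing "/*" on each principal so any subject from the issuer matches.
PRINCIPALS = [
    "http://keycloak.default:8080/auth/realms/opa/*",
    "http://istio-ingressgateway.istio-system:80/auth/realms/opa/*",
]
METHODS = ["POST", "GET"]
PATHS = ["/topics", "/topics/*", "/consumers/*"]
CLIENT_ROLES = ["opa-client-role"]


def istio_match(pattern: str, value: str) -> bool:
    """Exact, prefix ("abc*"), suffix ("*abc") or presence ("*") match."""
    if pattern == "*":
        return value != ""
    if pattern.endswith("*"):
        return value.startswith(pattern[:-1])
    if pattern.startswith("*"):
        return value.endswith(pattern[1:])
    return value == pattern


def any_match(patterns: Iterable[str], value: str) -> bool:
    return any(istio_match(p, value) for p in patterns)


def is_allowed(iss: str, sub: str, method: str, path: str,
               client_role: Union[str, List[str], None]) -> bool:
    """Simplified model of the ALLOW rule; not Istio's actual implementation."""
    # Istio derives the request principal as "<iss>/<sub>" from a validated JWT.
    principal = f"{iss}/{sub}"
    # This model treats a list-valued claim as matching if any element matches.
    if isinstance(client_role, str):
        roles = [client_role]
    else:
        roles = client_role or []
    return (
        any_match(PRINCIPALS, principal)
        and method in METHODS
        and any_match(PATHS, path)
        and any(role in CLIENT_ROLES for role in roles)
    )


if __name__ == "__main__":
    iss = "http://keycloak.default:8080/auth/realms/opa"
    print(is_allowed(iss, "user-1", "POST", "/topics/my-topic", "opa-client-role"))
    print(is_allowed(iss, "user-1", "DELETE", "/topics/my-topic", "opa-client-role"))
```

In this model a DELETE, a path outside `/topics` and `/consumers`, or a token without the `opa-client-role` claim is denied. Because the policy uses `action: ALLOW`, Istio also denies any request to the selected workload that no ALLOW rule matches.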

We enable TLS client authentication on one of the Kafka listeners. When we then create a new KafkaUser with `authentication: type: tls`, Strimzi automatically generates a user certificate for it:

```yaml
- name: tlsauth
  type: internal
  port: 9096
  tls: true
  authentication:
    type: tls
```

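Setting `tls: true` encrypts traffic on this listener. Setting the authentication type to `tls` requires clients to present a certificate (mutual TLS). Communication between Kafka and ZooKeeper is encrypted by Strimzi independently of these listener settings.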
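A Kafka client connecting directly to this listener (rather than through the bridge) would need both halves of the mutual-TLS setup. It needs the cluster CA to trust the brokers, and its own user certificate and key to authenticate. The sketch below builds librdkafka-style properties, as used by clients such as confluent-kafka. The file paths are hypothetical mount points for `ca.crt` from the `my-cluster-cluster-ca-cert` secret and `user.crt`/`user.key` from the user's secret.

```python
from typing import Dict


def tls_client_config(bootstrap: str, ca_path: str,
                      cert_path: str, key_path: str) -> Dict[str, str]:
    """librdkafka-style properties for a client of the mutual-TLS listener."""
    return {
        "bootstrap.servers": bootstrap,
        # tls: true -> the connection is encrypted with TLS
        "security.protocol": "SSL",
        # cluster CA certificate, used to verify the brokers
        "ssl.ca.location": ca_path,
        # authentication type tls -> the client presents its KafkaUser certificate
        "ssl.certificate.location": cert_path,
        "ssl.key.location": key_path,
    }


config = tls_client_config(
    "my-cluster-kafka-bootstrap:9096",   # same bootstrap address the bridge uses
    "/etc/kafka/ca/ca.crt",              # hypothetical mount paths
    "/etc/kafka/user/user.crt",
    "/etc/kafka/user/user.key",
)
```

With confluent-kafka, a dict like this could be passed straight to `Producer(config)` or, with a `group.id` added, to `Consumer(config)`.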

We also set the Kafka cluster's authorization type to `simple`, which means access is controlled by Kafka ACLs:

```yaml
kafka:
  authorization:
    type: simple
    superUsers:
      - CN=kowl
```

List any users that should bypass ACL checks under `superUsers`.

We can then set up the bridge user and include its ACL permissions in the KafkaUser definition:

```yaml
apiVersion: kafka.strimzi.io/v1beta1
kind: KafkaUser
metadata:
  name: bridge
  namespace: kafka
  labels:
    strimzi.io/cluster: my-cluster
spec:
  authentication:
    type: tls
  authorization:
    type: simple
    acls:
      # access to the topic
      - resource:
          type: topic
          name: my-topic
        operations:
          - Create
          - Describe
          - Read
          - Write
          - AlterConfigs
        host: "*"
      # access to the group
      - resource:
          type: group
          name: my-group
        operations:
          - Describe
          - Read
        host: "*"
      # access to the cluster
      - resource:
          type: cluster
        operations:
          - Alter
          - AlterConfigs
        host: "*"
```
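The Python sketch below is a deliberately simplified model of how `simple` authorization treats the rules above. Super users always pass. Everyone else is denied unless an ACL on the matching resource grants the operation. It ignores details of Kafka's real authorizer, such as operations implied by others, prefixed resource patterns and DENY rules. The `CN=...` user strings follow the `superUsers` entry.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class AclRule:
    resource_type: str               # "topic", "group" or "cluster"
    operations: FrozenSet[str]
    name: Optional[str] = None       # None for the cluster resource
    host: str = "*"


# Mirrors superUsers in the Kafka resource above.
SUPER_USERS = {"CN=kowl"}

# Mirrors the ACLs of the bridge KafkaUser above (literal resource names).
BRIDGE_ACLS: List[AclRule] = [
    AclRule("topic", frozenset({"Create", "Describe", "Read", "Write", "AlterConfigs"}), "my-topic"),
    AclRule("group", frozenset({"Describe", "Read"}), "my-group"),
    AclRule("cluster", frozenset({"Alter", "AlterConfigs"})),
]


def is_authorized(user: str, acls: List[AclRule], resource_type: str,
                  operation: str, name: Optional[str] = None,
                  host: str = "*") -> bool:
    """Super users pass; otherwise deny unless some ACL matches."""
    if user in SUPER_USERS:
        return True
    for rule in acls:
        if rule.resource_type != resource_type:
            continue
        if rule.name is not None and rule.name != name:
            continue
        if rule.host != "*" and rule.host != host:
            continue
        if operation in rule.operations:
            return True
    return False


if __name__ == "__main__":
    print(is_authorized("CN=bridge", BRIDGE_ACLS, "topic", "Write", "my-topic"))
    print(is_authorized("CN=bridge", BRIDGE_ACLS, "topic", "Write", "other-topic"))
```

In this model the bridge can write to `my-topic` but not to any other topic. `CN=kowl` can do anything because it is listed as a super user.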

The bridge authenticates to Kafka with the `bridge` user's certificate (mutual TLS). The ACLs above then determine what it is authorized to do:

```yaml
apiVersion: kafka.strimzi.io/v1beta2
kind: KafkaBridge
metadata:
  name: strimzi-bridge
  namespace: kafka
spec:
  replicas: 1
  bootstrapServers: my-cluster-kafka-bootstrap:9096
  http:
    port: 8080
  logging:
    type: inline
    loggers:
      logger.bridge.level: "DEBUG"
      logger.send.name: "http.openapi.operation.send"
      logger.send.level: "DEBUG"
  tls:
    trustedCertificates:
      - secretName: my-cluster-cluster-ca-cert
        certificate: ca.crt
  authentication:
    type: tls
    certificateAndKey:
      secretName: bridge
      certificate: user.crt
      key: user.key
```

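Records can then be posted to a topic with a call like this. Every call must carry a JWT in an `Authorization: Bearer` header to pass the AuthorizationPolicy.

Endpoint: POST http://istio-ingressgateway.istio-system:80/topics/my-topic

Content-Type: application/vnd.kafka.json.v2+json

Payload: {"records":[{"key":"key-366","value":"value-366"}]}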
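For illustration, the produce call can be built with Python's standard library. The sketch builds the request without sending it, so the URL, headers and body can be inspected first. The `token` value is a hypothetical placeholder for a JWT issued by the Keycloak realm.

```python
import json
import urllib.request
from typing import Dict, List

BRIDGE_URL = "http://istio-ingressgateway.istio-system:80"


def produce_request(topic: str, records: List[Dict], token: str) -> urllib.request.Request:
    """Build (but do not send) a bridge request that produces JSON records."""
    body = json.dumps({"records": records}).encode("utf-8")
    return urllib.request.Request(
        f"{BRIDGE_URL}/topics/{topic}",
        data=body,
        method="POST",
        headers={
            # Media type the bridge expects for JSON-formatted records.
            "Content-Type": "application/vnd.kafka.json.v2+json",
            # JWT checked by the Istio AuthorizationPolicy (placeholder token).
            "Authorization": f"Bearer {token}",
        },
    )


req = produce_request("my-topic", [{"key": "key-366", "value": "value-366"}], token="<jwt>")
# Sending it: urllib.request.urlopen(req) should return a JSON body
# describing where each record was written.
```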

Records are read from a topic in three steps:

i) Create a consumer in a consumer group

Endpoint: POST http://istio-ingressgateway.istio-system:80/consumers/my-group

Content-Type: application/vnd.kafka.v2+json

Payload: {"name":"my-consumer","format":"json","auto.offset.reset":"earliest","enable.auto.commit":false}

ii) Subscribe the consumer to a topic

Endpoint: POST http://istio-ingressgateway.istio-system:80/consumers/my-group/instances/my-consumer/subscription

Content-Type: application/vnd.kafka.v2+json

Payload: {"topics":["my-topic"]}

iii) Read records from the topic

Endpoint: GET http://istio-ingressgateway.istio-system:80/consumers/my-group/instances/my-consumer/records

Accept: application/vnd.kafka.json.v2+json
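The three consumer steps can be sketched the same way. The following Python builds the requests in order without sending them. The token is a hypothetical placeholder. The two media types are the ones the Strimzi bridge uses for consumer management calls and for JSON record payloads.

```python
import json
import urllib.request
from typing import Any, List, Optional

BRIDGE_URL = "http://istio-ingressgateway.istio-system:80"
V2 = "application/vnd.kafka.v2+json"            # consumer management calls
JSON_V2 = "application/vnd.kafka.json.v2+json"  # records in JSON format


def _request(method: str, path: str, token: str, body: Optional[Any] = None,
             content_type: Optional[str] = None,
             accept: Optional[str] = None) -> urllib.request.Request:
    headers = {"Authorization": f"Bearer {token}"}
    if content_type:
        headers["Content-Type"] = content_type
    if accept:
        headers["Accept"] = accept
    data = json.dumps(body).encode("utf-8") if body is not None else None
    return urllib.request.Request(BRIDGE_URL + path, data=data,
                                  method=method, headers=headers)


def consumer_requests(group: str, name: str, topics: List[str],
                      token: str) -> List[urllib.request.Request]:
    """Requests for steps i) to iii), in the order they must be sent."""
    base = f"/consumers/{group}"
    return [
        # i) create the consumer instance in the group
        _request("POST", base, token,
                 {"name": name, "format": "json",
                  "auto.offset.reset": "earliest", "enable.auto.commit": False},
                 content_type=V2),
        # ii) subscribe it to the topics
        _request("POST", f"{base}/instances/{name}/subscription", token,
                 {"topics": topics}, content_type=V2),
        # iii) poll for records (repeat this call to keep consuming)
        _request("GET", f"{base}/instances/{name}/records", token,
                 accept=JSON_V2),
    ]


reqs = consumer_requests("my-group", "my-consumer", ["my-topic"], token="<jwt>")
```

The payload sets `enable.auto.commit` to false, so offsets are not committed automatically. The consumer would need to commit them explicitly through the bridge, otherwise a new consumer in the group starts again from `earliest`.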

These calls are routed to the bridge by an Istio Gateway and VirtualService:

```yaml
apiVersion: networking.istio.io/v1beta1
kind: Gateway
metadata:
  name: strimzi-bridge-gateway
  namespace: kafka
spec:
  selector:
    istio: ingressgateway # use Istio gateway implementation
  servers:
  - port:
      number: 80
      name: http
      protocol: HTTP
    hosts:
    - "*"
---
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: strimzi-bridge-vs
  namespace: kafka
spec:
  hosts:
  - "*"
  gateways:
  - strimzi-bridge-gateway
  http:
  - name: "strimzi-bridge-routes"
    match:
    - uri:
        prefix: "/topics"
    - uri:
        prefix: "/consumers"
    route:
    - destination:
        port:
          number: 8080
        host: strimzi-bridge-bridge-service.kafka.svc.cluster.local
```
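The routing in the VirtualService can be modelled as a plain string-prefix check. The Python below is a simplified sketch, not Envoy's matcher. Any path starting with `/topics` or `/consumers` is sent to the bridge service on port 8080, and everything else has no route, which the gateway typically answers with a 404. Note that a string prefix also matches paths such as `/topicsfoo`.

```python
from typing import Optional

# Values taken from the VirtualService above.
ROUTE_PREFIXES = ("/topics", "/consumers")
BRIDGE_HOST = "strimzi-bridge-bridge-service.kafka.svc.cluster.local"
BRIDGE_PORT = 8080


def route(path: str) -> Optional[str]:
    """Return the upstream the gateway would pick, or None if no route matches."""
    if any(path.startswith(prefix) for prefix in ROUTE_PREFIXES):
        return f"{BRIDGE_HOST}:{BRIDGE_PORT}"
    return None


if __name__ == "__main__":
    for p in ("/topics/my-topic", "/consumers/my-group", "/"):
        print(p, "->", route(p))
```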

Note: to use Kowl with this setup, you'll need to create a `kowl` KafkaUser and configure Kowl to connect over TLS:

```yaml
apiVersion: kafka.strimzi.io/v1beta1
kind: KafkaUser
metadata:
  name: kowl
  namespace: kafka
  labels:
    strimzi.io/cluster: my-cluster
spec:
  authentication:
    type: tls
```

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: kowl-config-cm
  namespace: kafka
data:
  config.yaml: |
    kafka:
      brokers:
        - my-cluster-kafka-0.my-cluster-kafka-brokers.kafka.svc:9093
      tls:
        enabled: true
        caFilepath: /etc/strimzi/ca/ca.crt
        certFilepath: /etc/strimzi/user-crt/crt/user.crt
        keyFilepath: /etc/strimzi/user-key/key/user.key
```

- `ca.crt` comes from the `my-cluster-cluster-ca-cert` secret, while `user.crt` and `user.key` come from the `kowl` secret.
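In a real deployment these secrets would be mounted into the Kowl pod as volumes. The Python sketch below only makes the mapping explicit: it decodes the base64 `data` of each Secret, in the shape returned by `kubectl get secret -o json`, and shows which key ends up at each path referenced in `config.yaml`. The function names are hypothetical.

```python
import base64
from typing import Dict


def decode_secret(secret: Dict) -> Dict[str, bytes]:
    """Decode the base64 'data' map of a Kubernetes Secret."""
    return {key: base64.b64decode(value) for key, value in secret.get("data", {}).items()}


def kowl_tls_files(cluster_ca_secret: Dict, kowl_secret: Dict) -> Dict[str, bytes]:
    """Map each path used in config.yaml to the secret key it is read from."""
    ca = decode_secret(cluster_ca_secret)   # my-cluster-cluster-ca-cert
    user = decode_secret(kowl_secret)       # kowl (created for the KafkaUser)
    return {
        "/etc/strimzi/ca/ca.crt": ca["ca.crt"],
        "/etc/strimzi/user-crt/crt/user.crt": user["user.crt"],
        "/etc/strimzi/user-key/key/user.key": user["user.key"],
    }
```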

Admission Controllers

An admission controller is a piece of code that intercepts requests to the Kubernetes API server prior to persistence of the object, but after the request is authenticated and authorized.
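Kyverno and OPA Gatekeeper both plug into this mechanism as dynamic admission webhooks. The Python sketch below shows the core of a validating webhook's decision. It takes an `admission.k8s.io/v1` AdmissionReview request and returns a response that echoes the request `uid` and sets `allowed`. The rule itself (reject objects without a `team` label) is a hypothetical example, and the HTTPS server and webhook registration are omitted.

```python
from typing import Any, Dict


def review(admission_review: Dict[str, Any]) -> Dict[str, Any]:
    """Build an AdmissionReview response for a validating webhook.

    Hypothetical rule: reject objects that do not carry a 'team' label.
    """
    request = admission_review["request"]
    obj = request.get("object") or {}
    labels = obj.get("metadata", {}).get("labels") or {}
    allowed = "team" in labels
    # The response must echo the uid of the request it answers.
    response: Dict[str, Any] = {"uid": request["uid"], "allowed": allowed}
    if not allowed:
        response["status"] = {"code": 403, "message": "missing required label 'team'"}
    return {"apiVersion": "admission.k8s.io/v1", "kind": "AdmissionReview",
            "response": response}
```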

Admission Controllers Reference

Kyverno

OPA Gatekeeper

OPA Gatekeeper: Policy and Governance for Kubernetes